How does Elasticsearch work? And why should you use it? Let's take a tour of this notable search engine and explain how you can begin to use it to transform your daily activities and improve data clarity.

Why Elasticsearch

If you're human, surely you've needed to search for something (or someone...) on the world wide web, right? Maybe you were looking for documentation, an article, or even a coded response on Stack Overflow for that bug of yours.

Now, remember the frustrating feeling of simply not finding what you're looking for? This is the problem Elasticsearch sets out to solve: search!

In simple terms, Elasticsearch helps us find what we are looking for.

Image courtesy of CodeChain.

So, What Is It?

Elasticsearch is a database that provides distributed search and analysis of different types of data in near real time. It is an open source search engine built on top of Apache Lucene. Building on Lucene reflects a wider trend in software engineering: Elasticsearch wraps a powerful but low-level library in a simpler interface with a shorter learning curve. Simplicity and productivity are best friends.

Elasticsearch offers client libraries for many programming languages, including:

- Java

- JavaScript

- Go

- .NET

- PHP

- Perl

- Python

- Ruby

How it Works

Elasticsearch handles large volumes of data without degrading performance. Because it exposes a REST API, it can be used from any platform that can make HTTP requests. It is also highly scalable: a deployment can grow from a single server to many servers working together.
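To make the REST API point concrete, here is a minimal Python sketch that builds a full-text search request using only the standard library. The index name `articles`, the field `title`, and the local address are assumptions chosen for illustration; `_search` and the `match` query are part of Elasticsearch's query DSL.

```python
import json
import urllib.request

# Assumption: a local, unsecured node on the default port used later in this post.
BASE_URL = "http://localhost:9200"


def build_search_request(index, text, field="title"):
    """Build (but do not send) a full-text search request for one index."""
    body = {"query": {"match": {field: text}}}
    return urllib.request.Request(
        url=f"{BASE_URL}/{index}/_search",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def run_search(request):
    """Send the request and decode the JSON reply (needs a running node)."""
    with urllib.request.urlopen(request, timeout=5) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # "articles" is a hypothetical index name.
    req = build_search_request("articles", "inverted index")
    print(req.get_method(), req.full_url)
```

Building the request is kept separate from sending it, so you can inspect the URL and body without a running node. Any language that can send HTTP and JSON could do the same.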

Elasticsearch searches an inverted index, which works as follows:

- The moment a document is indexed, Elasticsearch splits its text into tokens.

- It then determines which tokens are relevant, filtering out stop words such as articles and prepositions when the analyzer is configured to do so.

- Next, it organizes the tokens into an index that records, for each token, which documents contain it.

- When a search is conducted, it will act on this inverted index instead of digging through each document individually, looking for the search terms.

This indexing process is what makes Elasticsearch a near-real-time search engine.
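The steps above can be sketched in a few lines of Python. This is a toy illustration of the idea, not how Lucene implements it: the stop-word list, the sample documents, and the choice to require every query token to match are all assumptions, and there is no relevance scoring.

```python
import re
from collections import defaultdict

# Assumption: a tiny illustrative stop-word list, not the one any real analyzer uses.
STOP_WORDS = {"a", "an", "the", "of", "in", "on", "to", "and", "is"}


def tokenize(text):
    """Lowercase the text, split it into word tokens, and drop stop words."""
    return [t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOP_WORDS]


def build_inverted_index(documents):
    """Map each token to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for token in tokenize(text):
            index[token].add(doc_id)
    return index


def search(index, query):
    """Return the ids of documents that contain every token of the query."""
    tokens = tokenize(query)
    if not tokens:
        return set()
    result = set(index.get(tokens[0], set()))
    for token in tokens[1:]:
        result &= index.get(token, set())
    return result


# Hypothetical sample documents keyed by id.
docs = {
    1: "The quick brown fox",
    2: "A quick guide to search engines",
    3: "The fox is in the engine room",
}
inverted = build_inverted_index(docs)
print(sorted(search(inverted, "quick fox")))
```

The search only looks up two keys in the index and intersects their document sets. It never scans the text of the documents themselves, which is the point of the inverted index.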

Note that Elasticsearch is not a business intelligence tool, but you can run aggregation queries to generate graphs and gain useful insights for your business.
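As a rough idea of what an aggregation query looks like, the sketch below builds the JSON body of a `terms` aggregation, which counts documents per distinct value of a field. The field `category.keyword` and the aggregation name are hypothetical; the body would be sent to an index's `_search` endpoint.

```python
import json


def terms_aggregation(field, name="by_value", size=10):
    """Build a search body that returns only bucket counts for one field."""
    return {
        "size": 0,  # skip the matching documents, keep only the aggregation
        "aggs": {name: {"terms": {"field": field, "size": size}}},
    }


# Hypothetical field name; a keyword-type field is assumed.
body = terms_aggregation("category.keyword", name="orders_per_category")
print(json.dumps(body, indent=2))
```

Each bucket in the reply pairs a field value with a document count, which is the kind of data a bar or pie chart needs.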

Key Benefits

One of the biggest benefits of using Elasticsearch is that it is highly scalable and available due to its structure of nodes and clusters. For reference, a cluster is a group of connected nodes that share tasks, searches, and indexing. The nodes in an Elasticsearch cluster can be assigned different jobs or responsibilities, as listed below:

- Data Nodes: store data and execute data-related operations, such as search and aggregation;

- Master Nodes: perform cluster-wide management and configuration actions, such as adding and removing nodes;

- Client Nodes: forward cluster-level requests to the master node and data-related requests to data nodes; and

- Ingest Nodes: pre-process documents before indexing.
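For a sense of how these roles are assigned, the sketch below renders the role settings for a dedicated node as `elasticsearch.yml` lines. It assumes the boolean settings `node.master`, `node.data`, and `node.ingest` used by 6.x releases (the version installed later in this post); newer releases express roles differently, so check the documentation for your version.

```python
# Assumption: the boolean role settings of 6.x releases; newer releases
# express the same idea with a list of roles instead.
ROLE_SETTINGS = {
    "master": {"node.master": True, "node.data": False, "node.ingest": False},
    "data": {"node.master": False, "node.data": True, "node.ingest": False},
    "ingest": {"node.master": False, "node.data": False, "node.ingest": True},
    # A client (coordinating-only) node turns every role off.
    "client": {"node.master": False, "node.data": False, "node.ingest": False},
}


def render_settings(role):
    """Render the settings for one dedicated role as elasticsearch.yml lines."""
    settings = ROLE_SETTINGS[role]
    return "\n".join(f"{key}: {str(value).lower()}" for key, value in settings.items())


print(render_settings("data"))
```

In a small cluster a single node usually holds several roles at once. Dedicated roles like these matter more as the cluster grows.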

Beyond the high scalability and availability enabled by this structure, Elasticsearch is remarkable because:

- It enables fast full-text search.

- It provides security analysis and infrastructure monitoring.

- It can scale to thousands of servers and handle petabytes of data.

- It integrates with Kibana to provide real-time visualization of Elasticsearch data, so you can assess application performance and monitor logs and infrastructure metrics.

- It allows you to use machine learning to automatically model the behavior of your data in real time.

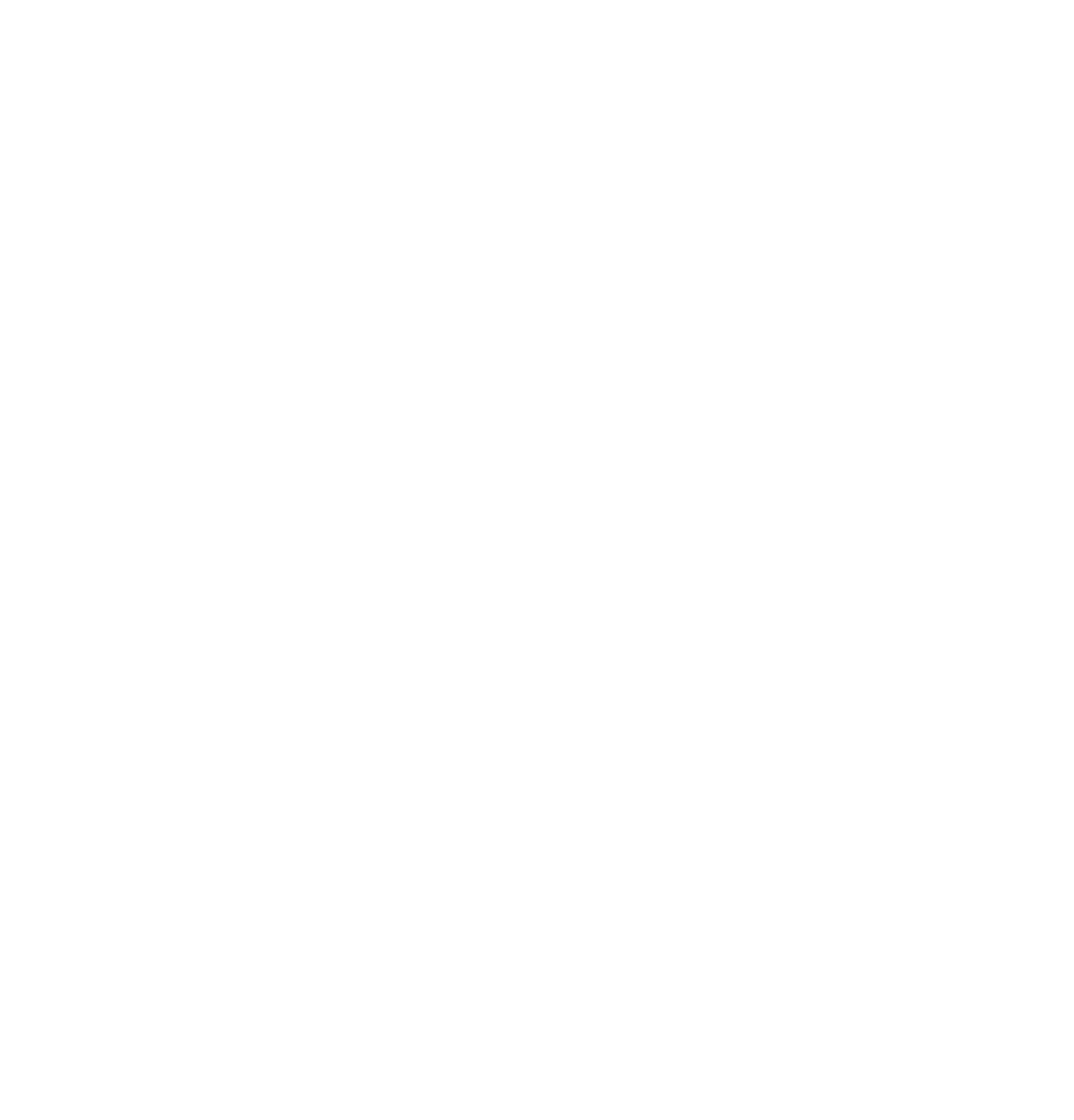

Elasticsearch Ecosystem

Kibana

Kibana is an Elasticsearch data analysis and visualization tool that works as a dashboard for data presentation. It provides options for building queries and presenting results. Kibana also provides some options for managing Elasticsearch, such as authentication and security.

Logstash

As its name suggests, Logstash was originally created to process log records and send them to Elasticsearch, but it has since evolved into a more complete tool. It is now used to synchronize data from different sources, such as a MySQL database, with Elasticsearch. Logstash also enriches data, for example by looking up geographic data for an IP address.

Beats

Beats is a collection of agents that can be installed on specific machines to send information to Elasticsearch. For example, you can have a Windows agent that sends data about the operating system (such as memory consumption, processing, logs, and various other information). The same can be done on Linux and for other platforms such as WildFly or NGINX.

Elasticsearch Installation

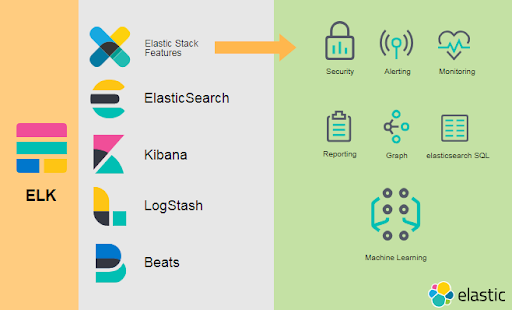

There are many ways to install Elasticsearch, including through Docker, but for now we will focus on the standard approach: download the package for your operating system from the Elasticsearch website and install it.

Please note: to install Elasticsearch, you must already have Java 8 installed on your computer. If you are not familiar with Java, be aware that you need the JRE (the runtime that runs Java applications), which you may already have on your machine. You can learn more about these downloads here.

Image courtesy of DevMedia.

In the image above, you can see that we're using version 6.4.2 of Elasticsearch for Windows.

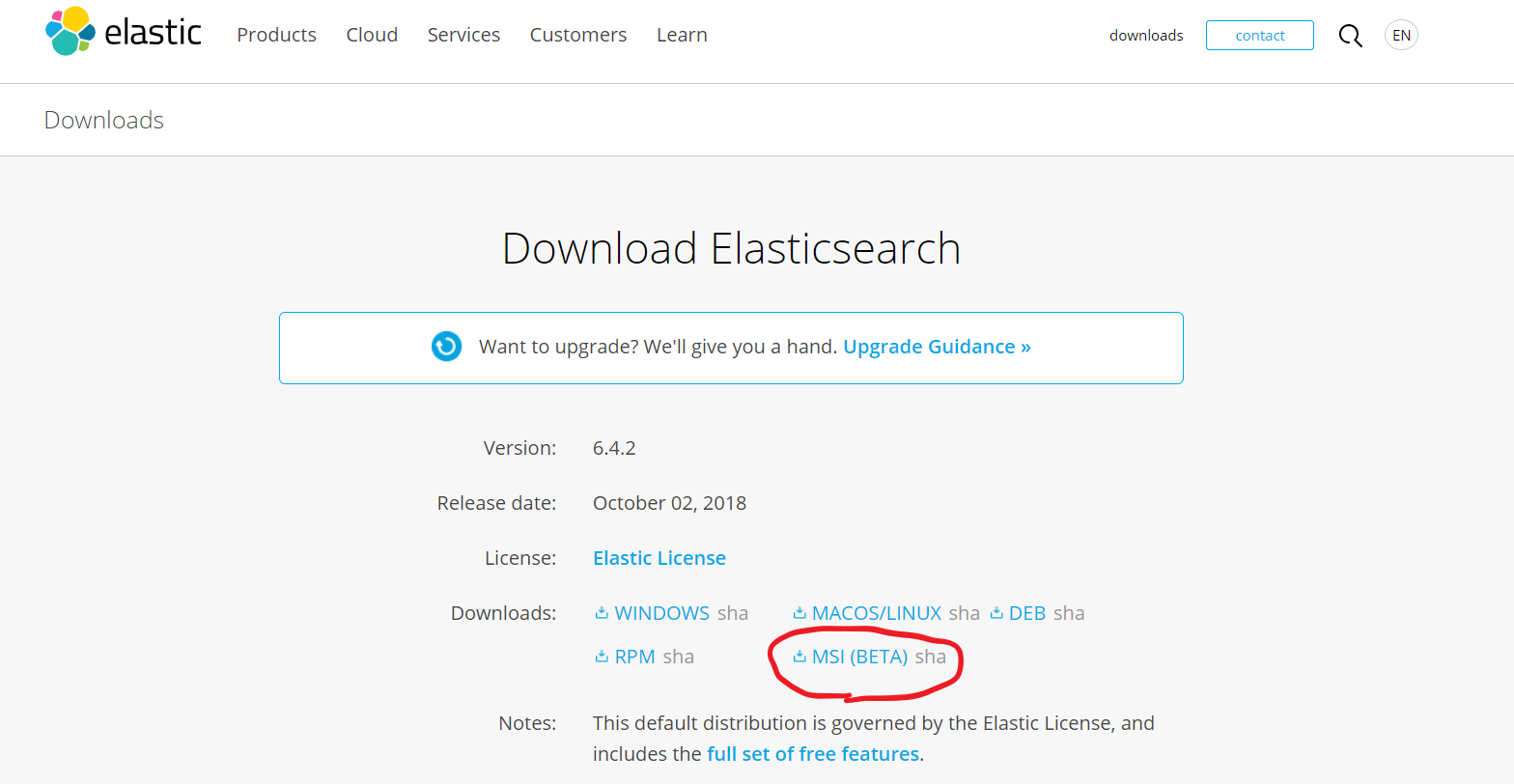

Image courtesy of DevMedia.

There is no need to change directories.

Image courtesy of DevMedia.

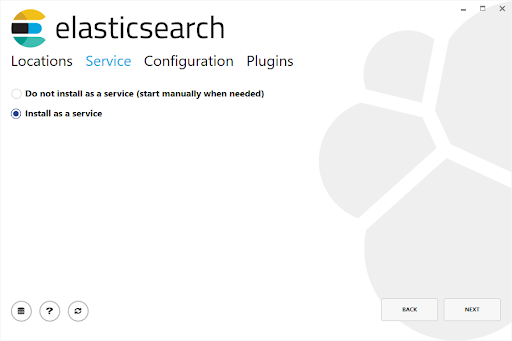

Select "Install as a service."

Image courtesy of DevMedia.

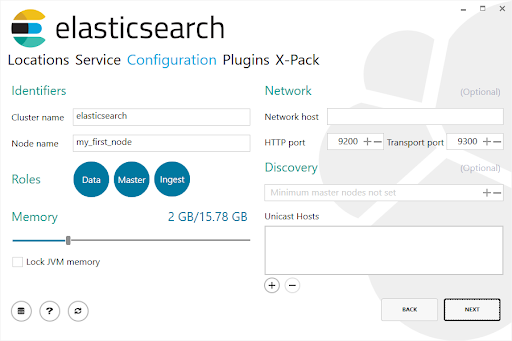

Here you have access to advanced settings.

Image courtesy of DevMedia.

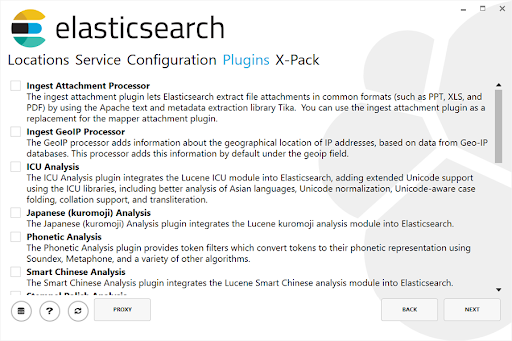

At this point, you can install additional plugins if that is of interest to you.

Image courtesy of DevMedia.

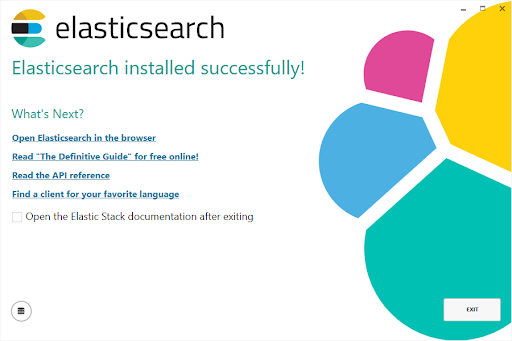

And voilà, this is our final installation screen!

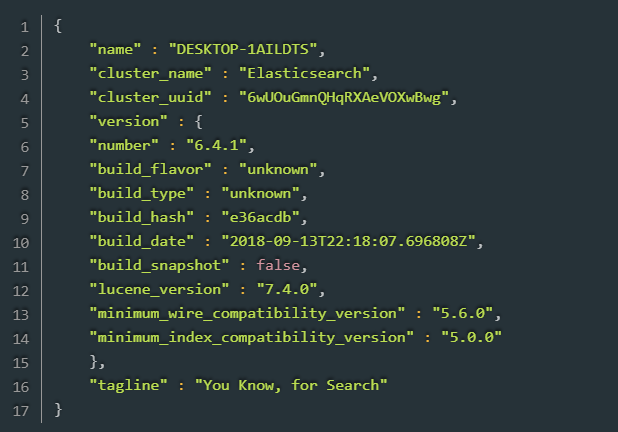

When we call Elasticsearch's REST API on the default port 9200, it returns a JSON response describing the node and its version (6.4 in this installation).
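If you would rather run this check from a script than from the browser, the Python sketch below requests the root endpoint and prints two fields from the reply. It assumes an unsecured node at `http://localhost:9200`; the helper names are made up for this example, while `cluster_name` and `version.number` are fields of the root response.

```python
import json
import urllib.request


def fetch_root_info(base_url="http://localhost:9200"):
    """GET the root endpoint and parse its JSON body (needs a running node)."""
    with urllib.request.urlopen(base_url, timeout=5) as response:
        return json.loads(response.read().decode("utf-8"))


def summarize(info):
    """Pick a few fields out of the root response, tolerating missing keys."""
    version = info.get("version", {}).get("number", "unknown")
    cluster = info.get("cluster_name", "unknown")
    return f"cluster={cluster} version={version}"


if __name__ == "__main__":
    try:
        print(summarize(fetch_root_info()))
    except OSError as error:  # URLError is a subclass of OSError
        print(f"Could not reach Elasticsearch: {error}")
```

If the service is not running yet, the script reports the connection error instead of crashing, which makes it a handy first check after installation.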

Kibana Installation

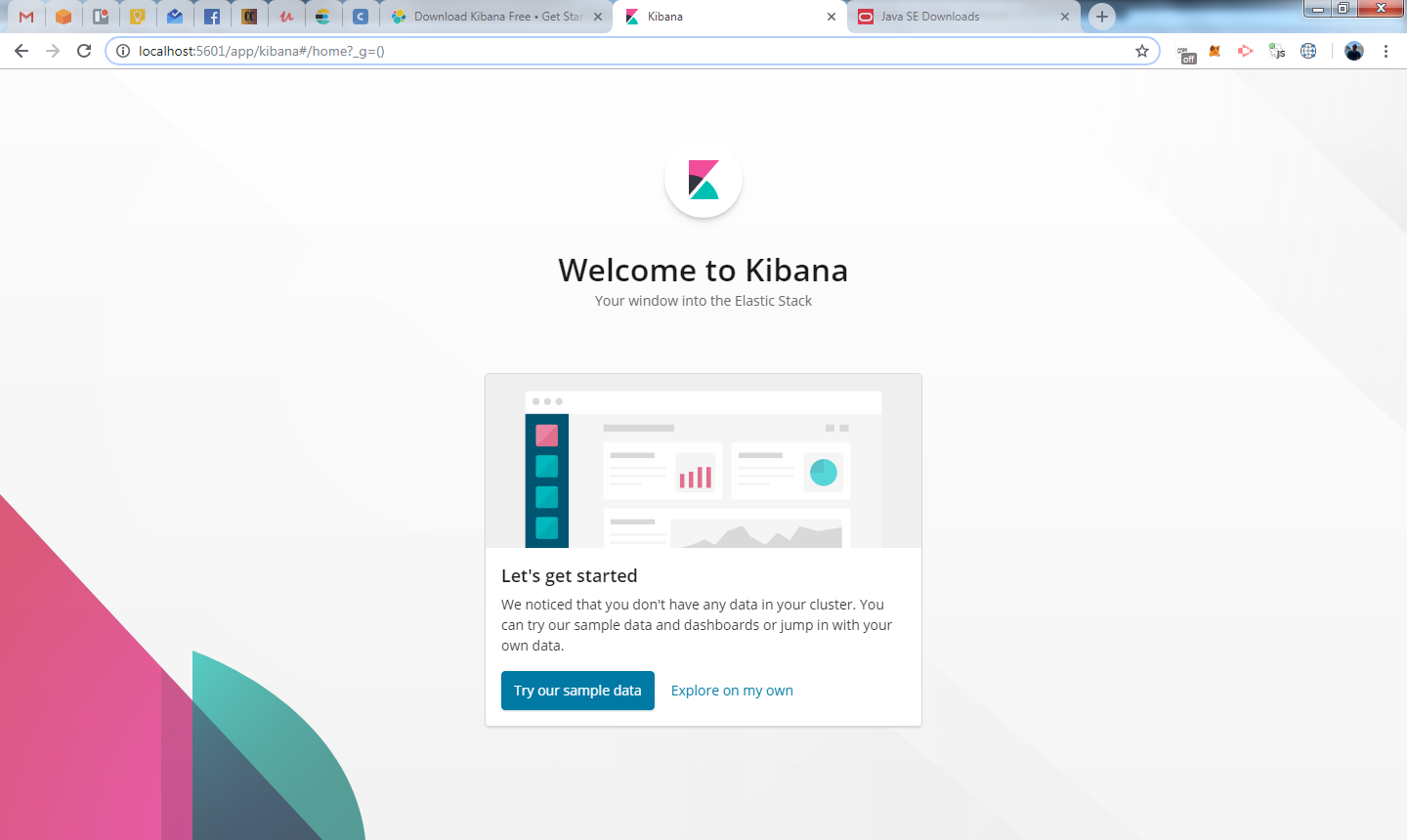

To install Kibana, follow the steps outlined on the Elasticsearch site and extract the zip file. Note that the default port for Kibana is 5601. After installation, run kibana.bat and open localhost:5601 in your browser to see the Kibana home screen.

Image courtesy of CodeChain.

You can load sample data to learn more about Kibana and its features, but we won't cover that in this post.

A Quick Recap

In today's post, we gave a quick introduction to the objectives, benefits, inner workings, and ecosystem of Elasticsearch, and we now have Elasticsearch installed on our operating system.

If you have questions or just want to share your favorite thing about Elasticsearch, please feel free to engage in this discussion by adding your comment below!

References

- "The heart of the free and open Elastic Stack." Elastic.

- "ElasticSearch para todos - Parte 1." Cateno Viglio Junior, CodeChain.

- "Beginner's guide to understanding the relevance of your search with Elasticsearch and Kibana." Lisa, Dev.

- "O que é Elasticsearch?" DevMedia.

- "Introdução ao Elasticsearch." Thiago S. Adriano, XP Inc.

- "Add and remove nodes in your cluster." Elastic.

Author

Anderson Weber

Anderson Weber is a Software Engineer at Avenue Code. He is passionate about data, A.I., startups, and the financial market.